LawFuel Power Briefing from LawFuel editors

AI-driven software stocks have slumped as investors suddenly re-price the risks and disruption posed by legal-focused models like Anthropic’s Claude.

For law firms, though, this is a reset in the legal AI market, not a retreat. The money is shifting from “AI at any price” to “AI that can survive the coming copyright and compliance storm,” and that is where serious firms should now be focusing.

What Actually Happened in Markets

The numbers are staggering. On 3 February 2026, a Goldman Sachs basket of US software stocks sank 6% in a single session, its biggest one-day decline since the tariff-fueled selloff of April 2025.

A parallel index of financial services firms tumbled almost 7%. The Nasdaq 100 Index fell as much as 2.4%.

The trigger? Anthropic released new AI automation capabilities targeting legal, sales, marketing, and data analytics—sectors previously thought insulated from AI disruption.

The carnage was immediate and global:

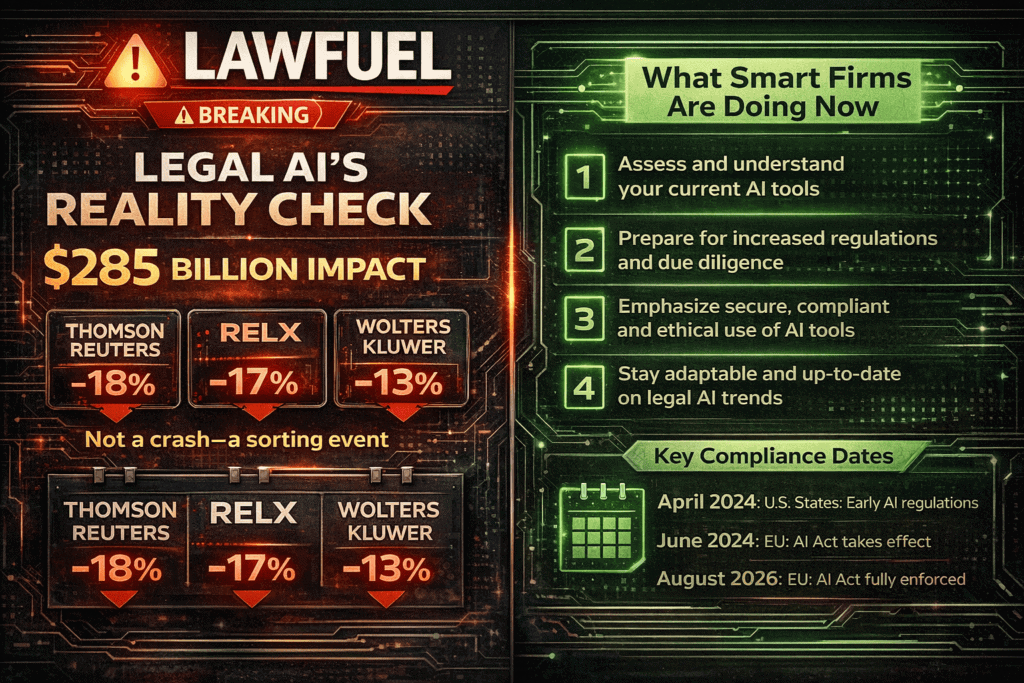

- Thomson Reuters crashed 18%—its worst single-day loss ever, pushing shares to levels not seen since June 2021. The stock is now down 33% year-to-date, compounding a 22% drop in 2025.

- RELX (owner of LexisNexis) plunged 17%—its biggest drop since 1988. The stock has now almost halved from its peak last February.

- Wolters Kluwer dropped 13%.

- LegalZoom tumbled nearly 20%.
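For readers checking the math on Thomson Reuters, the two declines quoted above multiply rather than add. A quick sketch, assuming the 22% figure covers calendar 2025 and the 33% figure is measured from the 2025 year-end close (the function name is ours, for illustration only):

```python
def cumulative_decline(*drops: float) -> float:
    """Combine successive fractional drops into one overall decline."""
    remaining = 1.0
    for drop in drops:
        # Each drop applies to whatever value is left after the previous one.
        remaining *= 1.0 - drop
    return 1.0 - remaining


# 22% drop in 2025, then a further 33% year to date (figures from the text).
overall = cumulative_decline(0.22, 0.33)
print(f"Overall decline: {overall:.1%}")
```

Under those assumptions the stock has lost a little under half its value since the start of 2025, not the 55% a simple sum would suggest.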

The iShares Expanded Tech-Software Sector ETF (IGV) is now down about 28% from its recent high—officially in bear-market territory—and off roughly 17% year to date.

As Bloomberg reported, the selloff “wiped $285 billion” from stocks across software, financial services, and asset management sectors in a single trading day.

“Software sentiment is the worst ever,” according to a note from Jefferies. “It’s radioactive.”

The Legal AI Backdrop: Claude, Copyright and Compliance Risk

For lawyers, the headline isn’t “AI is over.” It’s that capital is moving away from generic software multiples and toward AI products that can prove defensibility, compliance, and genuine productivity gains.

Why? Because Anthropic—Claude’s developer—is simultaneously showing what legal AI can do while facing sustained intellectual property pressure that exposes the industry’s unresolved copyright tensions.

The $1.5 Billion Bartz Settlement

In September 2025, Anthropic agreed to pay $1.5 billion to settle Bartz v. Anthropic, a class action alleging the company downloaded over 7 million books from pirate sites like Library Genesis to train its Claude models.

The settlement followed a split decision from Judge William Alsup in June 2025: using lawfully purchased books for AI training was “quintessentially transformative” fair use, but downloading pirated copies was “inherently, irredeemably infringing.”

Key settlement details, via Ropes & Gray:

- $1.5 billion minimum payment (approximately $3,000 per work for ~500,000 copyrighted works)

- Anthropic must destroy the pirated libraries and confirm destruction in writing

- Settlement covers past conduct only—no immunity for future claims or allegedly infringing AI outputs

- Final approval hearing scheduled for April 2026
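The per-work figure in the settlement summary is simple division, and it is worth seeing how it falls out of the headline number. A minimal sketch using only the two figures quoted above (the constant and function names are ours, and the 500,000 count is the approximate figure from the summary, not a final tally):

```python
SETTLEMENT_FLOOR_USD = 1_500_000_000  # minimum payment quoted above
ESTIMATED_WORKS = 500_000             # approximate number of works ("~500,000")


def per_work_payment(total_usd: int, works: int) -> float:
    """Average payment per work for a given fund size and work count."""
    if works <= 0:
        raise ValueError("works must be a positive number")
    return total_usd / works


print(per_work_payment(SETTLEMENT_FLOOR_USD, ESTIMATED_WORKS))  # 3000.0
```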

As Princeton University Press noted: “The Anthropic settlement affirms valuation of copyrighted work and provides an important legal precedent regarding unauthorized use of intellectual property.”

The New $3 Billion Music Publishers Lawsuit

The ink on Bartz was barely dry when a fresh lawsuit landed. On 29 January 2026, Concord Music Group, Universal Music Group, and ABKCO filed suit against Anthropic alleging mass piracy of over 20,000 copyrighted songs.

Per the complaint:

“While Anthropic misleadingly claims to be an AI ‘safety and research’ company, its record of illegal torrenting of copyrighted works makes clear that its multibillion-dollar business empire has in fact been built on piracy.”

The publishers allege that discovery in the Bartz case revealed Anthropic had illegally downloaded thousands more works than originally claimed. The lawsuit names CEO Dario Amodei and co-founder Benjamin Mann as defendants. Statutory damages could reach $3 billion, which would make it potentially one of the largest non-class-action copyright cases in US history.
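The $3 billion figure is consistent with the top of the US statutory damages range applied to roughly 20,000 works. A rough sketch of that arithmetic follows. The per-work amounts are the statutory damages tiers in 17 U.S.C. § 504(c) as we understand them ($750 to $30,000 per work, and up to $150,000 for willful infringement); the work count is the rounded figure from the complaint, and how a court would actually count works or set awards is not something this sketch addresses:

```python
WORKS_ALLEGED = 20_000           # "over 20,000" songs, rounded down

# Per-work statutory damages tiers (our reading of 17 U.S.C. § 504(c)).
STATUTORY_MIN_USD = 750
STATUTORY_MAX_ORDINARY_USD = 30_000
STATUTORY_MAX_WILLFUL_USD = 150_000


def exposure_range(works: int) -> dict[str, int]:
    """Total exposure at each statutory tier for a given number of works."""
    return {
        "minimum": works * STATUTORY_MIN_USD,
        "ordinary_maximum": works * STATUTORY_MAX_ORDINARY_USD,
        "willful_maximum": works * STATUTORY_MAX_WILLFUL_USD,
    }


for tier, total in exposure_range(WORKS_ALLEGED).items():
    print(f"{tier}: ${total:,}")
```

Only the willful-infringement ceiling gets to $3 billion, which is why the complaint's piracy allegations matter so much to the headline number.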

The EU AI Act: Compliance Becomes Financial Risk

Adding regulatory pressure, the EU AI Act reaches a critical milestone in August 2026, when the full requirements for high-risk AI systems take effect. The Act’s high-risk list includes AI systems used to assist in the administration of justice, so legal AI tools that touch that work can fall within the category.

Key obligations, per K&L Gates:

- Penalties of up to €35 million or 7% of global annual turnover, whichever is higher, for prohibited practices

- Mandatory conformity assessments and risk management systems

- Human oversight mechanisms must be operational

- Training data documentation and copyright compliance required
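To see what the top penalty tier means in practice, a rough sketch of the ceiling calculation follows. It assumes the cap is the higher of the fixed amount and the turnover percentage, which is our reading of the Act's penalty article for prohibited practices; it ignores the lower tiers for other breaches and the different rule for smaller companies. The function name and turnover figures are illustrative only:

```python
FIXED_CAP_EUR = 35_000_000   # fixed ceiling quoted above
TURNOVER_CAP_PERCENT = 7     # percentage of global annual turnover


def max_penalty_eur(global_turnover_eur: int) -> int:
    """Upper bound on a fine: the higher of the fixed cap and 7% of turnover."""
    turnover_based = global_turnover_eur * TURNOVER_CAP_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


# Hypothetical turnover figures, chosen only to show both branches.
print(max_penalty_eur(100_000_000))    # 35000000  (fixed cap applies)
print(max_penalty_eur(2_000_000_000))  # 140000000 (7% of turnover applies)
```

The crossover sits at €500 million in turnover: below that the fixed €35 million is the ceiling, above it the percentage takes over.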

As Wilson Sonsini warns: “If your organization touches the EU market, the compliance clock is ticking.”

Put bluntly, the Claude era has made it impossible for serious buyers—including law firms—to ignore training data, copyright exposure, and vendor governance when they evaluate AI tools. That shift is now being priced into AI-exposed software stocks.

How This Fits the Broader Legal Market Story

Legal spend on AI and knowledge tools has been running far ahead of inflation. According to the 2026 Report on the State of the US Legal Market from Thomson Reuters and Georgetown Law:

- Technology spending grew 9.7% in 2025

- Knowledge management tools grew 10.5%

- These represent “the fastest real growth likely ever experienced in the legal industry”

But the same report sounds warning bells for 2026:

- Demand forecasts point toward contraction by Q3 2026

- Corporate net spend anticipation has dropped to levels not seen since the pandemic

- 90% of legal dollars still flow through billable hours—even as firms pour money into AI

As FindLaw observed: “Law firms have seen this movie before, and they should remember how it ends.”

Meanwhile, 26% of in-house teams expect to cut spending on law firms in 2026, even as hourly rates continue to climb. The message is clear: when in-house departments can use AI to handle routine work, there’s less reason to pay premium rates.

The LawFuel Take: Sorting Event, Not Crash

This is less a crash than a sorting event, separating legal AI that can withstand regulatory, copyright, and client scrutiny from vaporware sold on slide decks and conference buzz.

Consider what Morningstar noted in its analysis:

“Claude’s legal plug-in has nothing to do with legal research, which is the core value proposition and wide-moat foundation of Thomson and RELX’s legal businesses.”

And Artificial Lawyer makes a crucial point: Thomson Reuters, LexisNexis, and Wolters Kluwer are “legal data fortresses”—they’ve spent decades curating proprietary case law and contract data that AI tools can’t simply replicate.

The question isn’t whether AI disrupts legal technology. It’s which AI tools will survive the coming compliance storm, and which firms will be left holding expensive, indefensible subscriptions.

As National Law Review summarized: “Governance is no longer optional. Between the EU AI Act (August 2026), Colorado AI Act (June 2026), and proliferating state requirements, formalized AI policies have moved from best practice to compliance obligation.”

What Lawyers and Law Firms Should Be Doing Now

1. Treat the Sell-Off as a Due-Diligence Moment, Not an Exit

Use the current correction to stress-test every AI deployment and vendor. Key questions:

- What training data sources did the model use?

- What is the vendor’s copyright posture?

- What model governance and fallback processes exist?

- Can outputs be audited?

Build “data integrity” questions into your vendor selection and panel reviews, including explicit attestations that foundation models were not trained on pirated or clearly unlawful datasets.
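Firms that want to track these answers consistently across vendors could record them in a simple structure. The sketch below is one hypothetical way to do that: the class, field names, and vendor name are ours, mapped loosely onto the four questions above, and are not drawn from any standard or vendor questionnaire.

```python
from dataclasses import dataclass


@dataclass
class VendorReview:
    """One vendor's answers to the due-diligence questions (hypothetical fields)."""
    vendor: str
    training_data_disclosed: bool = False
    copyright_attestation: bool = False
    governance_and_fallback: bool = False
    outputs_auditable: bool = False

    def open_items(self) -> list[str]:
        """Return the questions this vendor has not yet answered satisfactorily."""
        checks = {
            "training data sources": self.training_data_disclosed,
            "copyright attestation": self.copyright_attestation,
            "governance and fallback": self.governance_and_fallback,
            "auditable outputs": self.outputs_auditable,
        }
        return [label for label, satisfied in checks.items() if not satisfied]


review = VendorReview(
    "ExampleVendor",  # placeholder name, not a real product
    training_data_disclosed=True,
    outputs_auditable=True,
)
print(review.open_items())  # ['copyright attestation', 'governance and fallback']
```

Anything left in the open-items list is a question to take back to the vendor before renewal or panel review.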

2. Pivot from “AI Adoption” to “AI Defensibility”

Make validation and review the point of difference:

- Invest in human-in-the-loop checks and quality control layers

- Document workflows around AI outputs

- Track hard ROI metrics—faster turnaround on commodity work, improved win rates, better client reporting

As Stanford research found, error rates remain significant even for legal-specific tools: 17% for Lexis+ AI and 34% for Westlaw AI-Assisted Research. Over 700 court cases worldwide now involve AI hallucinations.

3. Rebalance Portfolios: From Big Bets to Targeted Tools

Shift experimentation budgets toward narrow, domain-specific legal AI—tools built around your practice’s documents, playbooks, and data—rather than undifferentiated general models.

Per Artificial Lawyer’s predictions:

“In 2026, ‘model quality’ becomes table stakes, and the differentiation moves to domain grounding, integrations, guardrails, and workflow execution.”

Where vendors are caught in the market downdraft, push for better pricing and clearer commitments on roadmaps, uptime, security, and compliance. The leverage has subtly moved toward sophisticated buyers.

4. Turn the AI Market Wobble into a Client-Facing Story

Use thought leadership, briefings, and client alerts to explain how your firm is responding to the AI correction:

- Tightening governance

- Upgrading tools

- Protecting clients against copyright and regulatory risk

For in-house counsel, this is a prime moment to:

- Revisit corporate AI policies

- Rein in “shadow AI” use by employees

- Align outside counsel guidelines with your own AI standards

Key Dates to Watch

| Date | Event | Implications |
|---|---|---|
| March 2026 | Anthropic settlement claims deadline | Window closes for author/publisher claims |
| April 2026 | Bartz v. Anthropic final approval hearing | Precedent for AI training data liability |
| June 2026 | Colorado AI Act takes effect | US state-level AI compliance begins |
| August 2026 | EU AI Act high-risk obligations apply | €35M+ penalty exposure for non-compliance |